The CLI isn't as scary as you think

VanJS

April 16th, 2025

Gavin Mogan

Senior Software Engineer at Digital Ocean.

https://www.gavinmogan.com

Hey everyone,

I'm Gavin. I am a Senior Software Developer at Digital Ocean, currently on the billing team. I've been there

just over 5 years now.

Today I'm planning on covering the basics of the command line and Linux, so it becomes a lot less intimidating. Don't worry about remembering everything, as this is more about exposing you to ideas, and my slides are downloadable. Don't worry if you know everything about Linux already either, as I guarantee, with no money back, that you will still learn one new thing. I know this because when I looked at my past presentations, I learned some things I had forgotten about.

Upfront notes

Talking about Linux. The rest are similar

$ is usually a shell prompt

Generally, Linux doesn't care what order CLI parameters are in.

Linux tools, like npm packages, like to do one thing and do it well.

Okay, a few notes before we get started.

While I know most developers these days, at least front end ones, will end up using an Apple laptop, I'm going to cover Linux as I know it best. The Apple CLI, Windows with WSL, and even to some degree PowerShell all overlap with it, so it shouldn't be hard to adapt things.

Firstly, whenever you see a dollar sign followed by a command, it usually indicates a command prompt. I've stuck with that convention here.

Up front: Linux usually doesn't care about the order of arguments, though some older Apple versions do. So if something I cover doesn't work, try switching the order of the arguments.

Classically, Linux tools, like node packages, try to do one thing well, so you can combine the best tools for the job.

Getting Help

Offline version

Most commands have a man(ual) page

$ man find

Shell builtins only have help

$ help for

Sometimes --help will return things

$ ls --help

First things first. Getting help. There are a bunch of built in solutions but don't underestimate talking to

other people.

Keyboard Shortcuts

Ctrl +r

Up / Down

Ctrl +w

Ctrl +k

Ctrl +u

Ctrl +a

Ctrl +e

Next up. Some of my fav keyboard shortcuts. They should all work in the default terminal, since bash and zsh both default to emacs mode.

The biggest life changer for me was reverse search. Most people get started in the terminal by typing out

everything every time. Then you quickly realize that if you hit up or down, you can go back through previously

typed out commands.

But ctrl+r, that's where the speed starts to kick in.

Keyboard Shortcuts

Ctrl +r - Reverse search through your bash history

So on my little contrived demo here, you can see some past commands. Then I hit ctrl+r and type out npm to search back through my history and run the same command as before. In this case, since it's the last command, it's probably easier to just hit up, but when you have a larger history, this is way easier than trying to think about how many commands ago you ran something.

There are tools out there to make this even better though.

Where am I?

Okay next up.

You can't really do anything if you don't know where you are. Most terminals will start you off in your home directory. On Linux that is under slash home, on Apple it's under slash Users, but you can configure it differently. I have some friends that have different terminal profiles that start them in different directories to make working on different projects easier. VS Code would be another one that drops you somewhere else, so it's worth knowing where you are.

How to Change Directory

Most OSes use forward slash - / as folder separator

Spaces and other characters should be escaped or quoted

Now that you know where you are, you need to get to where you want to go.

Just like with urls, there are relative and absolute paths.

If it starts with a slash, it's an absolute path.

If it doesn't, then it's probably a relative path.

And finally, if your path contains special characters like spaces, you'll want to add a backslash before each one, or wrap everything in quotes.
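Here is a rough sketch of those three points, run in a throwaway directory so nothing real gets touched. The directory names (`my project`, `src`) are made up for illustration.

```shell
# Work in a throwaway directory (mktemp -d prints an absolute path).
demo=$(mktemp -d)
mkdir -p "$demo/my project/src"

# Absolute path: starts with a slash, works from anywhere.
cd "$demo/my project"

# Relative paths: resolved from wherever you currently are.
cd src
cd ..

# Spaces need quotes or a backslash; both forms name the same directory.
cd "$demo/my project"
quoted=$(pwd)
cd "$demo"/my\ project
escaped=$(pwd)

echo "$quoted"
echo "$escaped"
```

Both `echo` lines print the same path, which is the point: quoting and escaping are two spellings of the same thing.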

Get back there

Now that you've got in the habit of moving around, it's time to go back.

cd minus will get you back to the last directory you were in.

I often use it when I'm working in a large mono repo. I want to switch to a directory, run a command, then go back to the root of the project.
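A small sketch of that mono repo round trip. The layout (`packages/billing`) is hypothetical and lives in a throwaway directory.

```shell
# Hypothetical mono repo layout, created in a throwaway directory.
repo=$(mktemp -d)
mkdir -p "$repo/packages/billing"

cd "$repo"                 # start at the project root
back_before=$(pwd)
cd packages/billing        # hop into a package to run something
here=$(pwd)

cd - > /dev/null           # jump back; cd - prints the directory, so silence it
back=$(pwd)

echo "was in: $here"
echo "now in: $back"
```

Running `cd -` a second time would flip you back into the package again, so it also works as a toggle between two directories.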

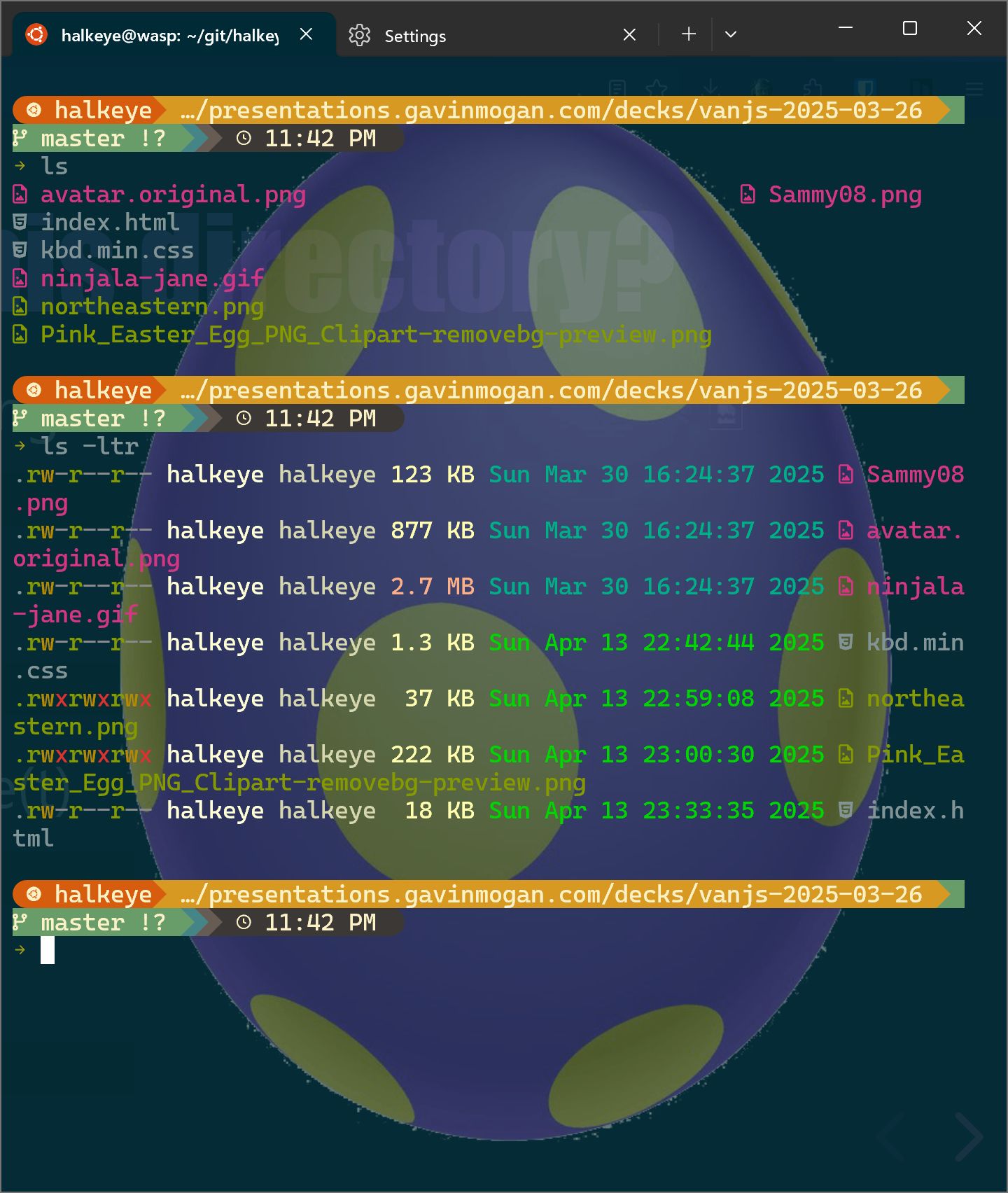

What is in this directory?

Simple Directory Listing

$ ls

Long Listing

$ ls -l

Sorted by Time(t), Reversed(r) so newest is last

$ ls -ltr

Hidden files

$ ls -a

Note, I'm using some customizations to make this more colorful.

When am I?

$ TZ=America/Vancouver date

Sun Apr 20 12:00:00 PDT 2025

Note: time command times a command, not time of day

$ time sleep 30s

sleep 30s 0.00s user 0.00s system 0% cpu 28.108 total

When am I? The date command is very useful. I've used it to print out the date before and after a command so I know how long it takes. There's also the built-in time command, which can tell you how long a command takes on your computer.
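The before-and-after trick could look something like this. It assumes your `date` supports `+%s` (seconds since the Unix epoch), which both the GNU and BSD versions do, and `sleep 1` is just a stand-in for whatever command you actually care about.

```shell
start=$(date +%s)     # seconds since the Unix epoch
sleep 1               # stand-in for the real command
end=$(date +%s)

elapsed=$((end - start))
echo "took ${elapsed}s"
```

For a one-off, `time` is less typing. The `date` version is handy inside scripts, where you might want to log the number or act on it.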

Testing HTTP

Curl and wget work kinda the same, but under different designs.

Wget is great at downloading files.

$ wget http://i.imgur.com/Ia48QDR.jpg

Curl is better at retrieving content.

$ curl https://httpcodes-8pkfp.ondigitalocean.app/json/404

Note: probably less true these days

Testing HTTP. Another random set of tools. Most systems will have wget or curl installed. Both will work, but

generally I use wget for downloading files, and curl for hitting APIs.

What's happened recently?

Head will give you the first n lines

Tail will give you the last n lines

Common Options

-f will keep tailing

-F will restart tailing if file is truncated

-n #num# will only print out #num# number of lines

Next up. Tail and Head. Tail will take a stream, commonly a file, and give you the last few lines. Head will do the opposite and give you the start of the stream.
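A quick way to see both in action without needing a log file is to feed them a stream of numbers. This is only a sketch, using `seq` as a stand-in for real input.

```shell
# seq prints 1..10, one number per line, which makes a handy test stream.
first=$(seq 1 10 | head -n 3)
last=$(seq 1 10 | tail -n 3)

echo "$first"   # 1, 2 and 3, each on its own line
echo "$last"    # 8, 9 and 10, each on its own line
```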

Redirection output

Output to file

$ echo "hi" > file.txt

Errors to file

$ curl http://fake.server 2> errors.txt

Output to file and console

$ echo "hi" | tee file.txt

Redirection is a very useful start towards less manual work. A single arrow to the right will take the output of a command and write it to a file. By default this is stdout. You can also be specific, like stream number 2, which is stderr, where errors go. Lastly there's the tee command, which lets you save the output and print it at the same time. I will talk about pipes soon.
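Here is a sketch of all three that runs without a network. It uses `ls` on a file that doesn't exist as a reliable way to produce an error, and the file names are made up.

```shell
cd "$(mktemp -d)"

# stdout goes into the file, so nothing reaches the terminal.
echo "hi" > out.txt

# ls fails on a missing file; 2> captures the error message instead of printing it.
ls does-not-exist 2> errors.txt || echo "ls failed, details are in errors.txt"

# tee writes to the file and still passes the text along to the terminal.
echo "both" | tee tee.txt

cat out.txt
cat errors.txt
```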

Redirection input

Input from file

$ mysql < import.sql

Next up is redirecting the other way. A single arrow to the left will let you take the contents of a file and use it as input for a command. With mysql, for example, you can send the contents of a SQL file to the mysql command to run them.
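If you don't have a database handy, the same idea works with any command that reads stdin. A minimal sketch using `sort` and a made-up file:

```shell
cd "$(mktemp -d)"
printf 'pie\ncake\napple\n' > words.txt

# sort is reading stdin here; the shell feeds it the contents of the file.
sorted=$(sort < words.txt)
echo "$sorted"
```

The command never sees the filename, only the contents, which is why this works with tools that have no "read from file" option at all.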

Wildcards (glob)

Will find development.log in every directory that has a logs directory underneath it.

$ tail -F ~/Develop/*/logs/development.log

Will find all log files under all directories that have a logs directory (one level deep)

$ tail -F ~/Develop/*/logs/*.log

Will find all log files under all directories that have a logs directory (infinite levels deep)

$ tail -F ~/Develop/**/logs/*.log

So in shell, there's actually a lot of different kinds of wildcards. At the most basic level, the star is easy

to understand. A star will essentially just match things. So star dot log will match anything that ends with

dot log. Star by itself will match anything. This only works in a single directory though. You also have the

ability to use double stars. They will match any number of sub directories. This is all called glob patterns

if you want to look them up more.
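A sketch you can poke at safely, using a made-up project layout in a throwaway directory. One caveat worth knowing: in bash the double star only recurses once the `globstar` option is switched on (bash 4 and newer), while zsh understands it out of the box.

```shell
# Hypothetical project layout in a throwaway directory.
cd "$(mktemp -d)"
mkdir -p app1/logs app2/logs app3/nested/logs
touch app1/logs/development.log app2/logs/test.log app3/nested/logs/deep.log

# A single star matches names inside one directory level only.
one_level=$(echo */logs/*.log)
echo "$one_level"      # app1/logs/development.log app2/logs/test.log

# Turn on recursive ** in bash; harmless in shells that lack the option.
shopt -s globstar 2>/dev/null || true
echo **/logs/*.log
```

Using `echo` in front of a glob is a cheap way to preview what it will expand to before handing it to something destructive.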

Chaining

true && echo true

false || echo false

false; echo always

So at a basic level, bash has boolean operators like javascript. So you can do two ampersands and only run the second command if the first one succeeds. Two ors if it fails, and a semicolon that just splits up two statements. And yes, before someone corrects me to say it's not actually success based, I'm not going to cover error codes today.

if and while statements

if true; then echo "its-a-me-truethy"; fi

while true; do echo "its-a-me-truethy"; done

for i in a b c; do echo $i; done

Bash does have a lot of control statements. The ones I use the most are really if, while and for. I'll be covering for in detail in later slides. And if shouldn't really be a surprise to people. While loops are actually really useful in bash. The one I've seen the most is waiting for something to start up. So while the SQL server isn't running, sleep and check again.
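Here is a sketch of that wait-until-ready pattern. To keep it runnable anywhere, it waits for a file to appear instead of a real server; the `ready` file and the two second startup delay are both made up.

```shell
# Hypothetical stand-in for "is the server up yet?": wait for a file to appear.
ready_file="$(mktemp -d)/ready"

# Pretend a service takes a couple of seconds to start, in the background.
( sleep 2; touch "$ready_file" ) &

attempts=0
while [ ! -e "$ready_file" ]; do
  attempts=$((attempts + 1))
  echo "not ready yet, attempt $attempts"
  sleep 1
done

echo "ready"
wait   # tidy up the background job
```

For a real service you would swap the `[ ! -e ... ]` test for whatever check tells you it is up. A cap on `attempts` is a sensible addition so the loop can't spin forever.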

Serious wildcards

Find all directories

$ find -type d

Find all files

$ find -type f

Find all files ending in log

$ find -name '*.log' # find all files ending in .log

$ find -name '*.log' -exec ls {} \; # executes a command for each file

$ find -name '*.log' -exec ls {} + # appends all files to one command

Run something in a directory with logs

$ find /var/log -name '*.log' -execdir pwd \;

Find can do a crazy amount of stuff. The most common usages for me are finding files or directories with specific names, and running commands on the files you found. While researching this, I found there's a newer -execdir option, which lets you run a command in the directory containing each file.
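A sketch of the two `-exec` styles side by side, on a made-up log layout in a throwaway directory. It passes `.` as the starting path explicitly, since POSIX find expects one even though GNU find will assume it.

```shell
# Hypothetical log layout in a throwaway directory.
cd "$(mktemp -d)"
mkdir -p api/logs web/logs
touch api/logs/app.log web/logs/access.log web/logs/notes.txt

# Files ending in .log; quote the pattern so the shell doesn't expand it first.
logs=$(find . -type f -name '*.log' | sort)
echo "$logs"

# -exec ... \; runs the command once per file found.
find . -name '*.log' -exec echo "one at a time:" {} \;

# -exec ... + runs the command once, with all the files appended.
find . -name '*.log' -exec echo "all together:" {} +
```

Swapping the real command for `echo` first, as done here, is an easy dry run before letting find loose with something like `rm`.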

Create Edit Update Destroy

cat <file> # outputs contents

tac <file> # outputs contents in reverse order

less <file> # outputs content (controlled)

rm <file> # Remove a file

rmdir <dir> # Remove an empty directory

mkdir <dir> # Create a directory (mkdir -p as bonus)

# Create all the directories required to make the full path

# (and doesn't error if already exists)

mkdir -p <dir/subdir/subdir2>

touch <file> # update timestamp/create empty file

Okay, just before I start to show you all how to combine things, I have the final list of things I couldn't figure out how to put elsewhere. Cat takes one or more files and outputs them. Great for looking at logs or source code. Tac does the same thing but in reverse order. I've not once ever found a use for this knowledge, but now you all have it too. Less and more will output files, but let you control the output, usually one page at a time. rm and rmdir remove files and directories. mkdir lets you create a directory, with dash p being super useful for making sub directories too. And lastly touch. Touch will create empty files, as well as update the last updated time. I mention all these because they're often useful for the more advanced pipes and loops.
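A short walk through most of that list, as a sketch in a throwaway directory. The `notes/2025/april` path and the file contents are made up.

```shell
cd "$(mktemp -d)"

# -p creates every missing directory in the path, and is fine with re-runs.
mkdir -p notes/2025/april
mkdir -p notes/2025/april

# touch creates an empty file, or just bumps the timestamp if it already exists.
touch notes/2025/april/todo.txt

echo "buy pie" > notes/2025/april/todo.txt
contents=$(cat notes/2025/april/todo.txt)
echo "$contents"

# rm removes the file; rmdir only succeeds once the directory is empty.
rm notes/2025/april/todo.txt
rmdir notes/2025/april
```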

Power of pipes

With a few combos you can do anything

Pipes are where you can start combining the things you've learned so far. Essentially they work left to right. First command pipe second command will take the output of the first one, and input it to the next command.

Grep and Awk Can Do anything

$ grep /favicon.ico access.log

# long output

$ grep /favicon.ico access.log | awk '{print $17}'

AppleWebKit/537.36

$ grep /favicon.ico access.log | awk -F'"' '{print $6}'

Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/40.0.2214.111 Safari/537.36

For example. Let's say you have a web server access log. It has a line every time someone hits your server. You don't want to look through every line yourself, that's crazy. But you can narrow it down and look for anyone grabbing the favicon. That's still a lot of output, but from there, we can just grab one field. By default awk splits on whitespace, so you only get part of the user agent, but with just one flag, you can grab the entire user agent.

If you pipe that into sort and uniq, you can actually start doing a bit of analysis, like finding out how many

unique browsers hit your server and grabbed the favicon.
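That sort and uniq step might look like the sketch below. The log lines are made up, in the common "combined" access log format, with short fake user agents so the output stays readable.

```shell
cd "$(mktemp -d)"

# Made-up access log lines in the common "combined" format.
cat > access.log <<'EOF'
10.0.0.1 - - [15/Apr/2025:04:23:00 +0000] "GET /favicon.ico HTTP/1.1" 200 318 "-" "Firefox"
10.0.0.2 - - [15/Apr/2025:04:23:01 +0000] "GET /favicon.ico HTTP/1.1" 200 318 "-" "Chrome"
10.0.0.3 - - [15/Apr/2025:04:23:02 +0000] "GET /index.html HTTP/1.1" 200 512 "-" "Chrome"
10.0.0.4 - - [15/Apr/2025:04:23:03 +0000] "GET /favicon.ico HTTP/1.1" 200 318 "-" "Chrome"
EOF

# Filter, pull out the user agent, then count how often each one appears.
# uniq only collapses neighbouring duplicates, which is why sort comes first.
report=$(grep /favicon.ico access.log | awk -F'"' '{print $6}' | sort | uniq -c | sort -rn)
echo "$report"
```

The report lists Chrome with a count of 2 and Firefox with a count of 1. The final `sort -rn` just puts the busiest browser at the top.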

For Loops

$ for i in gavin likes pie; do mkdir $i; done

$ ls -l

drwxr-xr-x 2 1000 1000 4096 Apr 15 04:23 gavin

drwxr-xr-x 2 1000 1000 4096 Apr 15 04:23 likes

drwxr-xr-x 2 1000 1000 4096 Apr 15 04:23 pie

Next up is for loops. For takes in a variable, and then a list of values. So for this example, I make 3

directories. Gavin. Likes. Pie....cause I do. I've used things like this when I want to run the same command

on multiple servers. But for can take in data from other sources too.

For Loops - wildcards

$ for i in *; do mv "$i" "$i.bak"; done

$ ls -l

drwxr-xr-x 2 1000 1000 4096 Apr 15 04:23 gavin.bak

drwxr-xr-x 2 1000 1000 4096 Apr 15 04:23 likes.bak

drwxr-xr-x 2 1000 1000 4096 Apr 15 04:23 pie.bak

And one option for that is just straight up shell wildcards. So for example, I can say for each file in this

directory, rename the file to file dot bak. Yes there are better ways to do it, but they don't show off the

power of for loops.

For Loops - subshells

$ for i in $(seq 1 10); do echo $i; done

1

2

3

4

5

6

7

8

9

10

You can also use sub shells. Sub shells are wrapped in dollar sign and parentheses. Seq is a cool command that

just outputs numbers from start to finish. There's actually a bunch of options like padding it with 0s, but

not really the point here.
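For the curious, the zero padding option is `-w`. A tiny sketch, where the `server-` prefix is just a made-up naming scheme:

```shell
# -w pads with leading zeros so every number has the same width.
names=$(for i in $(seq -w 8 10); do echo "server-$i"; done)
echo "$names"
```

That prints server-08, server-09 and server-10, which keeps names sorting nicely once you go past single digits.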

Search and Replace

Sed, perl, python, etc

I prefer perl pie

$ echo "Gavin likes pie" > file.txt

$ cat file.txt

Gavin likes pie

$ perl -pi -e 's/Gavin/Bibi/' file.txt

$ cat file.txt

Bibi likes pie

Real world example

for i in $(git ls-files -m); do

jsonlint $i && git add $i;

done

Real example time.

I was making changes to a lot of JSON files, and when I do that, I will often forget to add or remove a comma. Instead of committing, creating a PR, having tests fail, and repeating, I decided to only stage the files in git if they were valid.

So I created a little for loop that loops through all the git files that were modified and runs them through jsonlint. If they are considered valid, it git adds them.

After that I could look at files that were left and go in and fix them.

Just shows that you can build on the basics real quick.

Real World Example - Find and Replace multiple files

grep -r onedr0p . | \

awk -F: '{print $1}' | \

xargs perl -pi -e 's#ghcr.io/onedr0p#ghcr.io/home-operations#g'

For the last few weeks I've been trying to remember to write down the various one-liners I end up using over and over again. Every time I do, there are more and more things I feel I should have covered.

I included this one because xargs is so powerful.

So in this case: search all files for the phrase onedr0p.

Split on the colon so I just get the filename.

Then, using xargs, pass each filename into perl pie.

Non standard tools - JQ

https://jqlang.org/

$ curl -qs 'https://jsonplaceholder.typicode.com/todos/1' | jq '.'

{

"userId": 1,

"id": 1,

"title": "delectus aut autem",

"completed": false

}

$ curl -qs 'https://jsonplaceholder.typicode.com/todos/1' | jq '.id'

1

$ curl -qs 'https://jsonplaceholder.typicode.com/todos/1' | jq '.completed'

false

JQ is something I probably end up using every day in one way or another. I would say the most common use is to pretty print JSON text. You can, as shown here, curl and pipe to jq.

Next most common for me has to be pulling out specific info. It can handle properties, as shown, but also so much more, like arrays, either by numeric index or by filtering on other criteria.

It's also really great at producing content that can go into a for loop.

Non standard tools - gron

https://github.com/tomnomnom/gron

$ curl -qs 'https://jsonplaceholder.typicode.com/todos/1' | gron

json = {};

json.completed = false;

json.id = 1;

json.title = "delectus aut autem";

json.userId = 1;

Gron is a tool I really want to use more. It tries to make json more grepable by flattening everything out.

Eg curl + jq + gron

$ curl -qs 'https://jsonplaceholder.typicode.com/todos' | jq '[.[] | select(.completed == true)][0]' | gron

json = {};

json.completed = true;

json.id = 4;

json.title = "et porro tempora";

json.userId = 1;

So you can start combining them. Filter through jq, then flatten with gron.

The End

https://m.do.co/c/7d6859326b6a

As promised. If you want to try things out in a safe environment, feel free to use my referral code and create a droplet. If you already have an account, come talk to me or Rodrigo and we can help you out.

I also had to cut so many things to make room for other things I really wanted to bring up such as git

techniques, or fzf, or even the jump command. Some of which are fully fleshed out slides, some are just point

form notes. Feel free to poke around the deck or ask me more.

Extra Stuffsssss

Slides that were already done but decided against bringing them up

Debugging

Run a script in debug mode

$ bash -x script.sh

Enable debugging right now

$ set -x

SSH

What are ssh keys?

Why would you want them?

How do you use them?

man ssh_config

What's going on?

ps xf -A (My Favourite)

ps aux (Very portable)

More w's with ps = more wide

ps auxwww

pstree

top

Home Directories

$ cd

/home/gavinm

$ cd ~

/home/gavinm

$ cd $HOME

/home/gavinm

$ cd ~halkeye

/home/halkeye

How to get there? - Apple

I'm one of those weird backend developers that uses Windows full time, and it's been years since I used an Apple, but from what I read online, most people are pretty comfortable with the built-in Terminal app. When I was an Apple user, iTerm2 was the go-to app.

--

I have a slide in a bit that covers ones that work on all oses.